Securing Data Science Systems: Why Small Changes Can Have Big Consequences

- Maryam Ziaee

- 4 days ago

Updated: 22 hours ago

Data science and artificial intelligence are transforming how we conduct research and make decisions.

From academic environments to real-world systems, we rely heavily on data-driven models.

But there is a fundamental question we often ignore:

Can we truly trust these systems?

The Hidden Problem: Small Changes, Big Impact

One of the most overlooked challenges in AI is its sensitivity to subtle changes in input data, known as perturbations.

These changes are often invisible to humans—but not to machines.

A Simple Demonstration

Human vs AI Interpretation

While the difference between the original and perturbed images is barely noticeable to humans, an AI system may interpret the two very differently.

Human perception: The image appears unchanged

AI prediction: The output may shift significantly

This contrast highlights a fundamental challenge:

AI systems rely on learned patterns, not true understanding—making them vulnerable to subtle, adversarial changes.

Below is a simple demonstration showing how a very small change can affect data:

[Figure: three panels labeled Original Image, Perturbed Image, and Noise Visualization]

Explanation

We added a very small amount of noise to the image.

For humans → the image looks almost identical

For AI → this small change can significantly alter the output

This shows how sensitive AI systems can be.
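As a rough sketch of the demonstration above, the following Python snippet adds a small amount of random noise to an image and builds an amplified view of that noise. The synthetic gradient image, the `add_small_noise` helper, and the `epsilon` value of 2 intensity levels are assumptions made for illustration; they are not taken from the original demonstration.

```python
import numpy as np

def add_small_noise(image, epsilon=2.0, seed=0):
    """Add uniform noise in [-epsilon, +epsilon] to an image with values in [0, 255].

    Returns the perturbed image and the noise that was actually applied
    (after clipping back into the valid pixel range).
    """
    rng = np.random.default_rng(seed)
    noise = rng.uniform(-epsilon, epsilon, size=image.shape)
    perturbed = np.clip(image + noise, 0.0, 255.0)
    return perturbed, perturbed - image

# Stand-in for a real photo: a 64x64 grayscale gradient (hypothetical input).
image = np.tile(np.linspace(0.0, 255.0, 64), (64, 1))

perturbed, noise = add_small_noise(image, epsilon=2.0)

# The largest change to any single pixel, on a 0-255 scale.
max_change = np.abs(noise).max()

# Rescale the noise to [0, 1] so it becomes visible when plotted.
noise_vis = (noise - noise.min()) / (noise.max() - noise.min())

print(f"Largest per-pixel change: {max_change:.2f} out of 255")
```

A change of at most 2 levels out of 255 is well below what most people notice, yet every pixel the model receives has moved. Random noise like this is the mildest case; deliberately chosen perturbations of the same size are typically far more damaging.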

Human Perception

Sees: No meaningful difference

Interpretation: “This is the same image.”

Reason: Humans are robust to small noise

AI Model Prediction

Sees: Changed pattern

Interpretation: Different classification

Reason: Sensitive to small perturbations

What is Adversarial Weakness Analysis?

Adversarial Weakness Analysis (AWA) is the process of identifying how small, intentional changes can mislead AI systems.

In simple terms, it helps us understand:

Where models fail

How they can be tricked

How to make them more robust

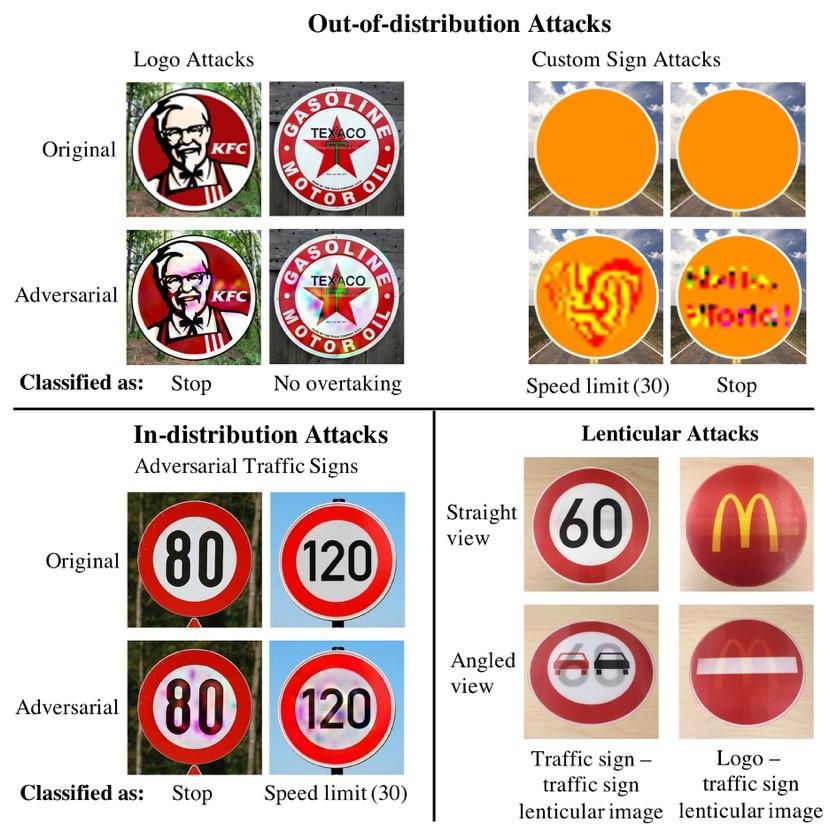
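To make "small, intentional changes" concrete, here is a minimal sketch using a toy linear classifier. The weights, the input, and the `sign_perturbation` helper are all hypothetical and chosen so the arithmetic is easy to follow; real analyses target trained models, usually with gradient-based methods that follow the same idea.

```python
import numpy as np

def predict(w, b, x):
    """Toy linear classifier: class 1 if w.x + b > 0, otherwise class 0."""
    return int(np.dot(w, x) + b > 0)

def sign_perturbation(w, b, x, epsilon):
    """Shift every feature by exactly epsilon in the direction that pushes
    the score toward the opposite class (a sign-based, worst-case step)."""
    score = np.dot(w, x) + b
    direction = -np.sign(score) * np.sign(w)
    return x + epsilon * direction

n = 1000                                            # number of input features
w = np.where(np.arange(n) % 2 == 0, 1.0, -1.0)      # hypothetical weights: +1, -1, +1, ...
b = 0.0
x = np.full(n, 0.5)
x[0] += 1.0                                         # original score is 1.0, so class 1

x_adv = sign_perturbation(w, b, x, epsilon=0.01)

print("original prediction: ", predict(w, b, x))
print("perturbed prediction:", predict(w, b, x_adv))
print("largest feature change:", np.max(np.abs(x_adv - x)))
```

No single feature moves by more than 0.01, but because all 1000 tiny shifts push the score in the same direction, their combined effect (about 10) overwhelms the original margin of 1. This accumulation across many input dimensions is one reason high-dimensional inputs such as images are easy to attack.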

Real-World Examples

Image Classification

A tiny change in pixels can completely change the model’s prediction.

Text & Security

Example:

Humans understand it—but systems can be misled.
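One hypothetical illustration of this kind of text manipulation, not necessarily the example the author had in mind, is a keyword filter that is evaded by swapping letters for look-alike characters. The blocked word, the substitution table, and both filter functions below are assumptions made for the sketch.

```python
BLOCKED_WORDS = {"password"}

# Hypothetical table mapping common look-alike characters back to letters.
LOOKALIKES = str.maketrans({"0": "o", "1": "l", "3": "e", "@": "a", "$": "s"})

def naive_filter(text):
    """Flag text only when a blocked word appears exactly as written."""
    lowered = text.lower()
    return any(word in lowered for word in BLOCKED_WORDS)

def normalized_filter(text):
    """Undo look-alike substitutions before matching blocked words."""
    lowered = text.lower().translate(LOOKALIKES)
    return any(word in lowered for word in BLOCKED_WORDS)

message = "Please send me your p@ssw0rd"

print("naive filter flags it:     ", naive_filter(message))
print("normalized filter flags it:", normalized_filter(message))
```

A person reads "p@ssw0rd" as "password" without effort, while the exact-match filter misses it. Normalizing the input first closes this particular gap, although a fixed substitution table is easy to outgrow and can misfire on legitimate text that happens to contain digits or symbols.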

This example also highlights an important question:

If such small changes are often difficult for humans to detect, can AI systems perform better?

The answer is not straightforward.

While AI can be trained to detect subtle anomalies at scale, many standard models are not designed for security and may themselves be vulnerable to these changes.

In fact, without proper safeguards, AI systems can be even more sensitive to small perturbations than humans.

This reinforces a critical point: security cannot be assumed—it must be intentionally designed into data science systems from the beginning.

Physical World Attacks

Small stickers placed on a stop sign can cause an image classifier to misread it as a different sign.

⚠️ Why This Matters

These vulnerabilities can lead to:

Incorrect predictions

Misleading research results

Security risks in real-world systems

And the problem is:

Most systems are built for performance—not security.

A Security-First Approach

The core idea of this work is:

Security should be built into data science systems from the beginning—not added later.

This includes:

Secure data pipelines

Robust models

Risk-aware design

Governance and best practices
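As one small example of what a secure data pipeline can involve, the sketch below checks a dataset file against a previously recorded SHA-256 digest before it is used. The file name, its contents, and the `verify_dataset` helper are hypothetical; this covers only accidental or malicious modification of a stored file, not the other items in the list.

```python
import hashlib
import tempfile
from pathlib import Path

def sha256_of(path):
    """Compute the SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(8192), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_dataset(path, expected_digest):
    """Return True only if the file still matches the recorded digest."""
    return sha256_of(path) == expected_digest

with tempfile.TemporaryDirectory() as tmp:
    data_file = Path(tmp) / "train.csv"            # hypothetical dataset file
    data_file.write_text("x,y\n1.0,0\n2.0,1\n")

    # Record the digest once, at the point where the data is trusted.
    trusted_digest = sha256_of(data_file)
    ok_before = verify_dataset(data_file, trusted_digest)

    # Simulate tampering: a single label is flipped from 1 to 0.
    data_file.write_text("x,y\n1.0,0\n2.0,0\n")
    ok_after = verify_dataset(data_file, trusted_digest)

print("matches before tampering:", ok_before)
print("matches after tampering: ", ok_after)
```

The check is only as trustworthy as the place where the recorded digest is kept, so the digest should be stored separately from the data it protects.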

Trusted AI Development

To build trustworthy systems, we must focus on:

Robustness → resisting small changes

Explainability → understanding decisions

Fairness → avoiding bias
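Robustness, the first item above, can be given a rough numerical reading: the share of inputs whose prediction stays the same when small random noise is added. The sketch below assumes a hypothetical `stability_rate` helper and a toy threshold model. Random noise only gives an optimistic estimate, since a deliberate attacker would search for the worst-case perturbation instead.

```python
import numpy as np

def stability_rate(predict_fn, inputs, epsilon, trials=50, seed=0):
    """Fraction of inputs whose predicted label never changes across
    `trials` rounds of uniform noise drawn from [-epsilon, +epsilon]."""
    rng = np.random.default_rng(seed)
    baseline = predict_fn(inputs)
    stable = np.ones(len(inputs), dtype=bool)
    for _ in range(trials):
        noise = rng.uniform(-epsilon, epsilon, size=inputs.shape)
        stable &= (predict_fn(inputs + noise) == baseline)
    return stable.mean()

def threshold_model(x):
    """Toy model (an assumption for this sketch): class 1 if the features sum to more than 0."""
    return (x.sum(axis=1) > 0).astype(int)

inputs = np.array([
    [2.0, 2.0],     # far from the decision boundary
    [0.01, 0.0],    # very close to the decision boundary
    [-3.0, -1.0],   # far from the decision boundary
])

rate_small = stability_rate(threshold_model, inputs, epsilon=0.001)
rate_large = stability_rate(threshold_model, inputs, epsilon=0.5)

print("stable under epsilon=0.001:", rate_small)
print("stable under epsilon=0.5:  ", rate_large)
```

Inputs that sit close to a decision boundary are the ones that lose stability first as the noise grows, which is why reporting accuracy alone says little about how a model behaves under perturbation.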

Humans vs AI

Interestingly:

Humans can understand noisy or imperfect data

AI systems often fail under the same conditions

This gap highlights the need for better system design.

Final Takeaway

Even the smallest changes in data can lead to major consequences.

If we cannot trust the data or the model, we cannot trust the results.

The demonstration in this post is a simple illustration of how small changes can affect AI systems.
